The Google-Samsung AI glasses and Meta Ray-Bans sound remarkably similar, but Android XR glasses could have three key advantages that make them worth the wait.

Last week, I tested prototype glasses running Android XR, the Gemini-powered platform behind Samsung’s 2026 AI glasses. Google director Juston Payne said these AI glasses “will help make this category possible,” but Meta brought smart glasses to the mainstream first. So it’s fair to ask how Google and Samsung plan to take on Meta’s AI glasses.

We don’t know how Warby Parker and Gentle Monster will design these glasses, and we don’t have camera or battery details yet. But the Android XR interface and apps felt polished and consumer-friendly in my hour-long demo, so I already have a good sense of what it will be like to use these glasses in everyday life.


Based on that demo, I expect Samsung’s display-free glasses and the Ray-Ban Meta glasses to have remarkably similar software, including “live” AI assistance and translation. Likewise, the monocular HUD glasses will resemble the Meta Ray-Ban Display, with widgets for mini apps, turn-by-turn navigation, and other overlapping features.

They are similar enough that people may end up choosing their smart glasses based on Warby Parker, Gentle Monster, or Ray-Ban/Oakley styles, not the AI behind them.

That said, I’ve identified three key differences that could help Android XR smart glasses stand out and give them significant advantages over Meta’s lineup. This doesn’t mean these glasses will be better, as Meta already has three generations of hardware experience under its belt. But it does mean that longtime Meta glasses wearers should pay close attention.

I won’t get into Gemini vs. Llama here. First, comparing conversational and recognition accuracy across many queries is harder to measure than judging how well a model writes or generates images. Second, both companies release new AI models often, so whichever looks more advanced today may not be in 2026.

We’ve used the multimodal Gemini Live on phones to keep plants alive and get home improvement advice, so I expect that to carry over to Samsung’s glasses, with Gemini always at the ready, although Meta has its own Live AI and handles image recognition well.

What stood out to me about Gemini while testing it on Wear OS is the way Google has integrated commands across its app library, chaining them together so you can pull data from one app and easily use it in another. For example, you can pull information from Messages or Maps and create a Keep note from the result.

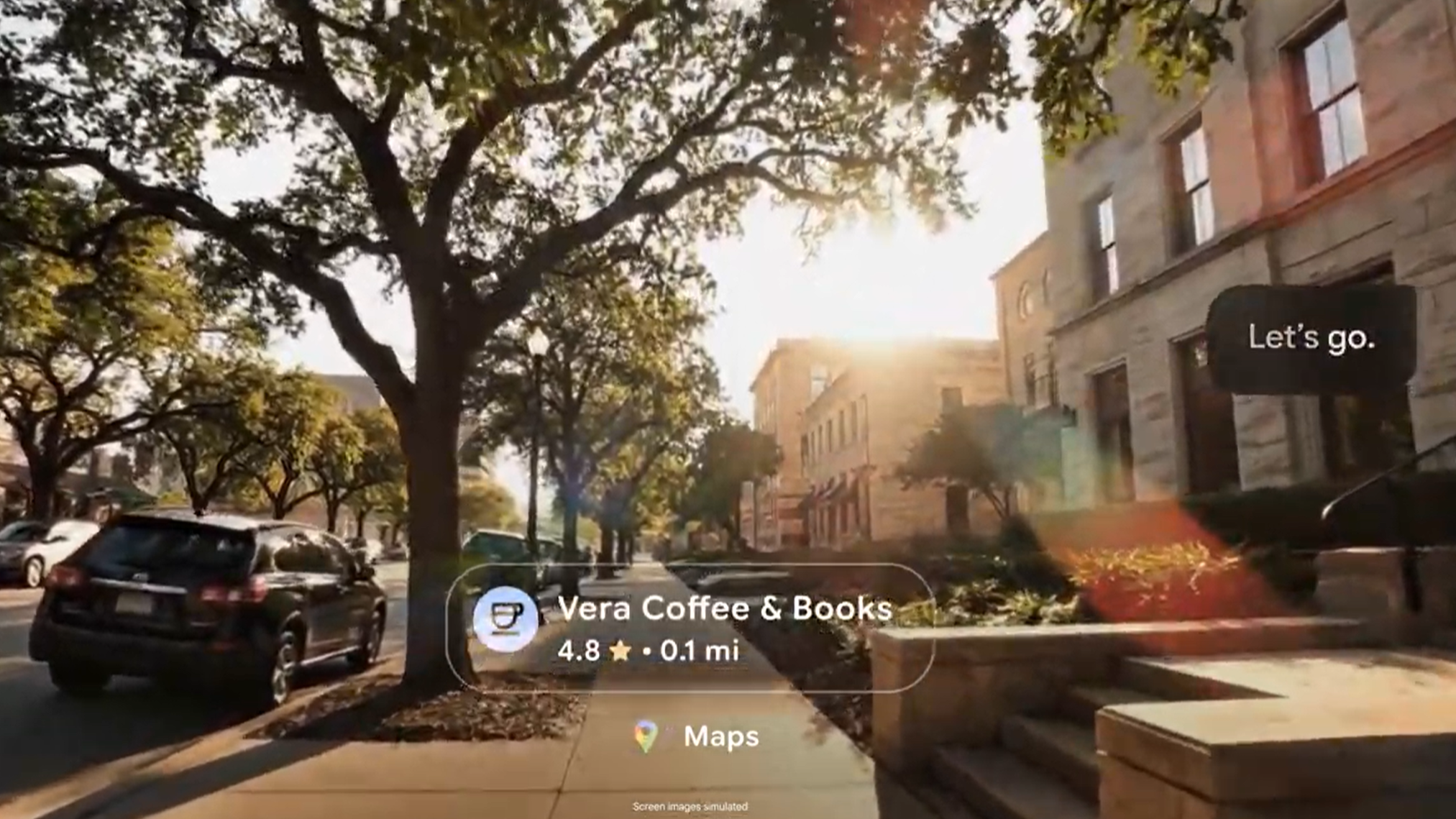

This integration with other apps will allow the HUD to display a preview of your Nest Doorbell feed, Google TV controls, a Gmail subject line pop-up, and more. And it’s not limited to Google apps: deep Android integration means any Gemini-compatible app should work with the glasses. Meta supports core phone, messaging, music, and social apps, but Google may have a true “ecosystem” advantage, at least on Android; iOS users may have a different experience.

Google’s photography technology will be essential

When reviewing the Ray-Ban Meta Gen 2 and Oakley Meta Vanguard glasses, I appreciated the sharp action photos they capture with such small sensors, and how well they stabilize video, so I seem to glide forward rather than shake and bounce with each step. A lot of post-processing goes into making this work.

I expect Google and Samsung to handle computational photography at least as well as Meta. Both make some of the best camera phones, with strengths in areas like motion capture, AI-powered zoom, edge detection for background blur, and more.

Of course, Samsung can’t fit phone-grade sensors into glasses frames; “Physics will get in the way,” as Payne explains, especially in challenging lighting. But he thinks they can “step up to that level of quality,” and I’ve seen, for example, how the Pixel 9a produces better photos than other mid-range phones despite its smaller, thinner sensors.

Taking pictures with the Ray-Ban Meta glasses is also tricky without a viewfinder, since you can’t tell how well you’ve framed the shot. But during my Android XR demo, Google showed a viewfinder feature on a paired Pixel Watch, so you can check whether the angle of your photo looks good in the moment, then retake it or use Gemini to edit it.

Android XR-Wear OS connectivity will be key

The Meta Ray-Ban Display really benefits from its sEMG band, which reads the electrical signals from the muscles in your wrist to recognize hand gestures without the camera needing to see them. I thought it would be difficult for Google and Samsung to match this feature.

It turns out that Android smartwatches will work with Android XR, and gestures like pinches detected by your watch will trigger actions on your glasses. That may be one reason Google added gesture support to Pixel Watches this month, so they’re ready to handle this feature in 2026.

Gestures and the viewfinder are the only confirmed features for now, but I expect an Android XR watch app that could add shortcuts, or an option to show Gemini’s responses as text on your wrist for audio-only glasses. I’d also expect an option to hear live workout stats through the glasses, similar to how the Oakley Vanguards read out Garmin stats.

Basically, because a smartwatch runs full-featured apps instead of being a dedicated input device, there’s more room for interplay between Android glasses and watches. Until that long-rumored Meta watch appears, Android XR will have a wearable edge.

Competition is always better

I’m looking forward to the new Samsung glasses, whatever they’re called, next year. I actually wear (non-smart) Warby Parker glasses, and I’m curious whether they’ll be able to create designs that look more natural for everyday wear, although I don’t know how well they’ll compete with the Ray-Ban and Oakley looks.

Ray-Ban and Oakley Meta fans can still stick with the designs and styles they know. The bottom line is that Meta doesn’t have much smart glasses competition yet. With Google and Samsung pushing to challenge its dominance, Meta has more impetus to innovate and stay ahead in sales.